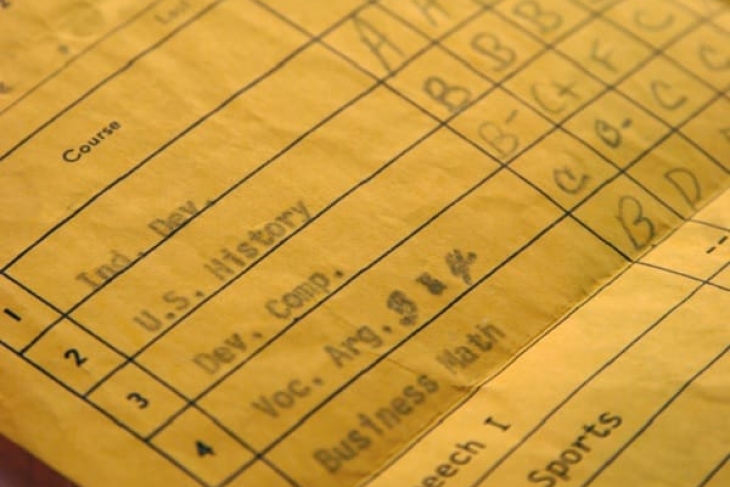

At this month’s meeting of the State Board of Education, members debated a draft proposal for a new set of graduation requirements that would give students multiple paths to a diploma. One path would use grade point average (GPA) as an indicator of competency. Students would need a GPA of 2.5 or better across at least two full years of high school math and English, as well as a 2.5 or better across four semesters of any single subject in the “well-rounded” category, which comprises science, social studies, art, and foreign language. The upshot is that a student could receive an Ohio diploma by earning a 2.5 GPA in certain courses (not overall) and playing a sport for four years.

It shouldn’t come as a surprise that Ohio education officials want to include GPA in state graduation requirements. The strategic plan for education released earlier this month called for the state to develop multiple ways for students to demonstrate their competency, and its main goal statement purposely shies away from measures like standardized tests. Research also shows a link between college success and a student’s high school GPA. For example, an Institute of Education Sciences study found that high school GPAs are an “extremely good and consistent predictor of college performance,” and that they “encapsulate all the predictive power of a full high school transcript in explaining college outcomes.”

But there is also evidence of widespread grade inflation. A recent Fordham-published analysis by Seth Gershenson of American University examined public school students taking Algebra I in North Carolina and found that a significant number of students who received high grades in that class did poorly on the state’s end-of-course (EOC) exam. Specifically, more than one-third of students who received B’s from their teachers failed to score proficient on the exam. That’s a pretty big deal, since the state’s Algebra I EOC is not only strongly predictive of math ACT results, but more predictive of them than course grades are. Interestingly, the data show that more grade inflation occurred in schools attended by more affluent students. Research from the College Board, as reported by The Atlantic, found similar results: Students enrolled in private and suburban public high schools were awarded higher grades than their urban peers despite similar levels of talent and potential. In fact, between 1998 and 2016, the GPAs of students at private and suburban public high schools went up even as their SAT scores went down.

Evidence of grade inflation points toward at least one serious issue with using GPA as a graduation pathway. Allowing students to graduate based on their average in certain classes instead of an overall GPA puts enormous pressure on the educators teaching those classes. If a student’s diploma is on the line, and it comes down to assigning an accurate grade or a slightly inflated one, teachers will be stuck between a rock and a hard place. Do they choose honesty, and face potential backlash from administrators worried about graduation rates or parents worried about their kids? Or do they inflate the grade a little and sidestep all the drama and potential consequences? Without an objective third party, such as state tests, the ACT, or industry certifications, certain high school teachers could become the sole gatekeepers of diplomas.

Ohioans saw this play out firsthand a few years ago, when administrators in Columbus City Schools took it upon themselves to withdraw students with poor attendance and low test scores and to alter student grades. The state auditor’s review of a sample of letter-grade changes found that more than 83 percent of the changes tested had no documentation supporting the need for a grade change. One assistant principal changed more than 600 grades from failing to passing because he believed “no student should get an F.” Ohio isn’t the only place this has happened, either. The scandal at Ballou High School in Washington, D.C., and the intense pressure put on teachers there, offers a similar warning.

To be clear, the conclusion to draw here is not that teachers and administrators are bad guys ready to game the system, or that standardized tests like EOCs or the ACT/SAT are way more reliable than GPAs and grades. The point is that each measure has its own value and place. In fact, there are studies showing that a student’s GPA and her composite ACT score together are more predictive of college success than either measure alone. There’s a reason that the vast majority of colleges still ask for multiple measures of a student’s ability, including GPA, class rank, test scores, and letters of recommendation. Likewise, there’s a reason that Ohio’s current graduation requirements depend on both passing certain courses (which translates to a decent GPA) and demonstrating competency via an objective assessment. A system that considers both not only helps keep each measure honest but also provides a clearer picture of the student as a whole.

Of course, none of this changes the fact that the recently proposed graduation requirements would allow students to graduate based almost solely on course grades and their resulting GPA. In a previous piece, I outlined a few other reasons why policymakers should be careful with GPAs. Since the issue is once again at the center of a statewide debate, it’s worth revisiting them.

First, comparability is a serious problem. GPA calculations vary widely across schools: there is no standard way to compute them, or to verify that a 2.5 at Whetstone High means the same thing as a 2.5 at New Albany High. Even colleges that have gone test-optional, like the University of Chicago, have acknowledged that GPAs alone don’t tell them much: “Since one school can be very different from another, it is useful to see evidence of academic achievement that exists outside of the context of your school,” its admissions page says.

Second, most of the research on the predictive power of GPAs only considers how students perform once they get to college. That ignores a huge part of the student population—students who don’t enroll in college and thus don’t have postsecondary outcomes to compare to their high school GPA. This likely includes a large portion of the cohort of students who are unable to pass Ohio’s end-of-course exams. Some of these students may have wanted to enroll in college but could not because their applications were denied. Others may have gotten accepted to a school and then simply chosen not to go. Without an accurate way to measure whether these students’ GPAs would also have predicted college success, it’s difficult to say how useful GPA is as a predictive tool for everyone.

Third, there is very little research linking GPAs to workforce success. Ohio graduates work in thousands of different jobs in dozens of fields, and because each field and role is unique and complex, it’s difficult to determine on a broad scale whether students are adequately prepared for the workforce. In fact, the only clear data Ohio seems to have for gauging career readiness come from the WorkKeys assessment, which is a standardized test. It seems like a serious oversight to award students a diploma based on their GPA when so many employers have said that graduates, many of whom have decent GPAs, are missing important skills.

Fourth, it’s inaccurate to claim that GPAs are more predictive of college success than Ohio’s current state tests. Most studies that examine whether GPAs predict college success use placement tests (like ACCUPLACER) or readiness tests (like the ACT or SAT) as their points of comparison. Some studies use state tests, but only those from other states. No study yet examines how well Ohio’s EOCs predict success at the collegiate level. If policymakers are determined to claim that GPAs are a better indicator of readiness than state EOCs, then they need to commission independent research to prove it.

Standardized tests don’t tell us everything we need to know about student potential, achievement, or ability. But neither do GPAs. As the old saying goes, it’s important to trust but verify. Given that GPAs are subject to grade inflation, aren’t easily comparable across schools and districts, and don’t clearly measure workforce readiness, policymakers should remain skeptical of relying on them as a graduation standard.